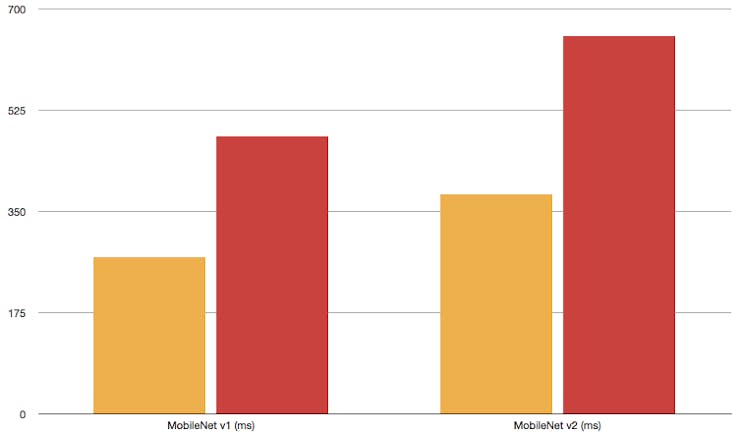

Inference time in ms for network models with standard (S) and grouped...

GitHub - dailystudio/tflite-run-inference-with-metadata: This repository illustrates three approaches to using TensorFlow Lite models with metadata on Android platforms.

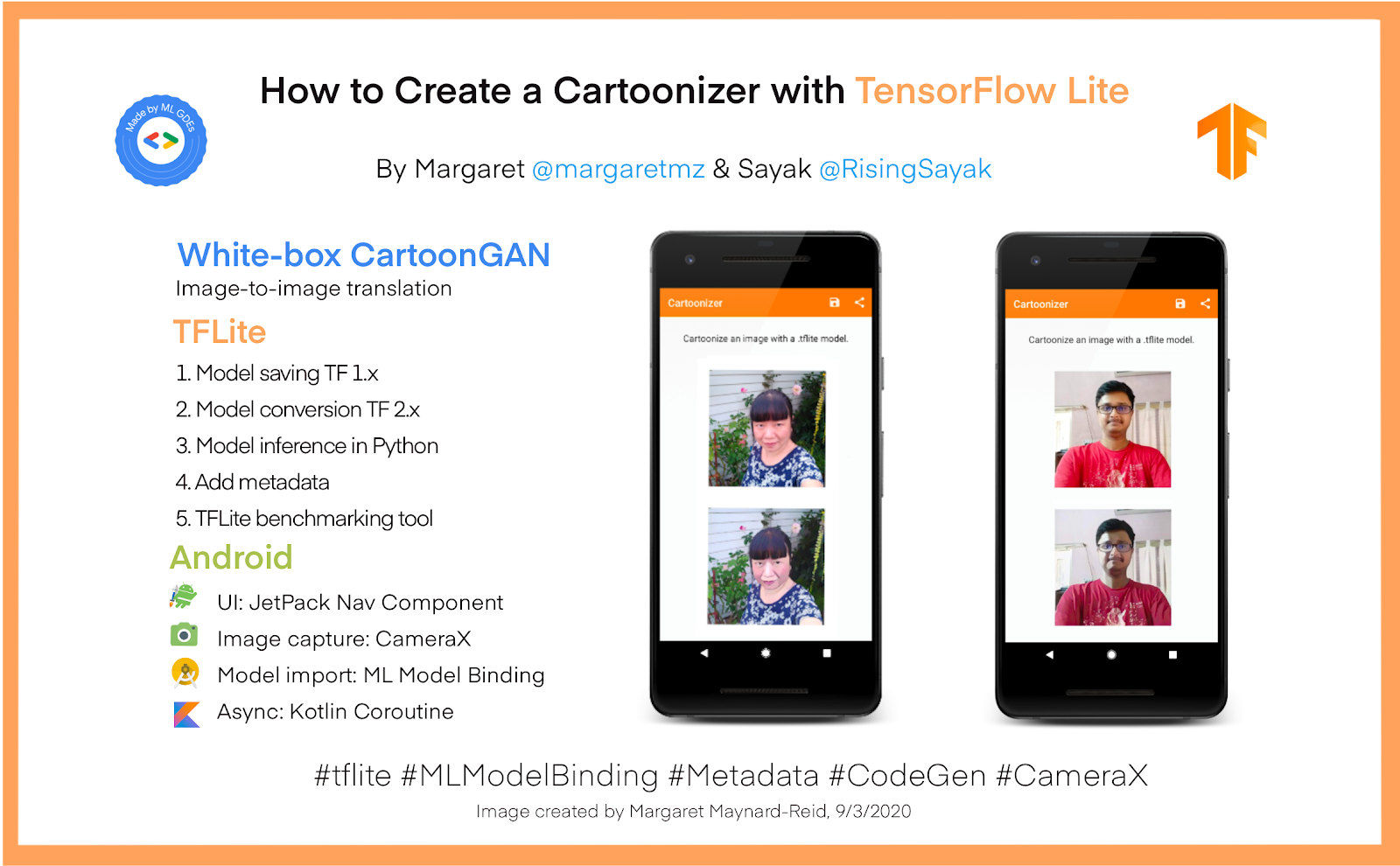

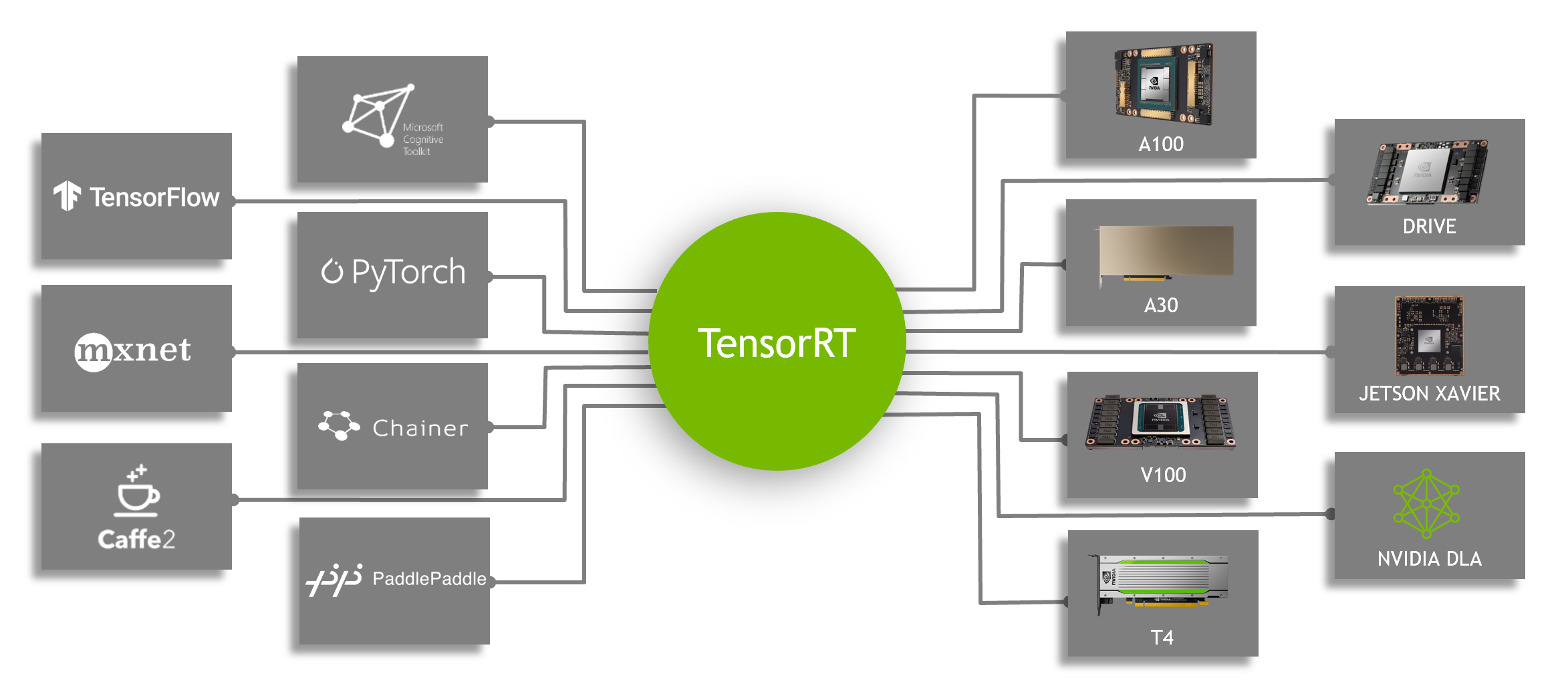

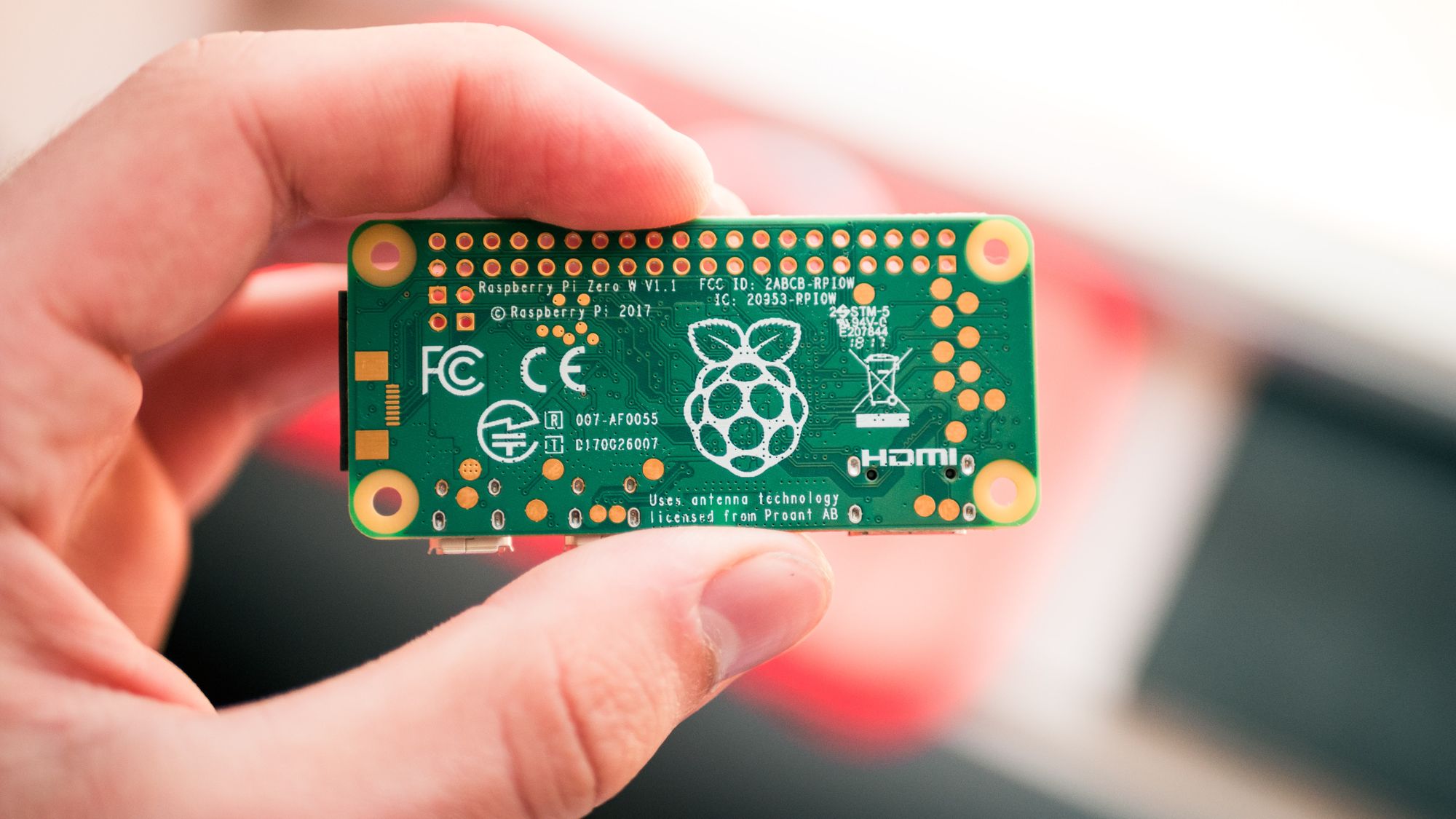

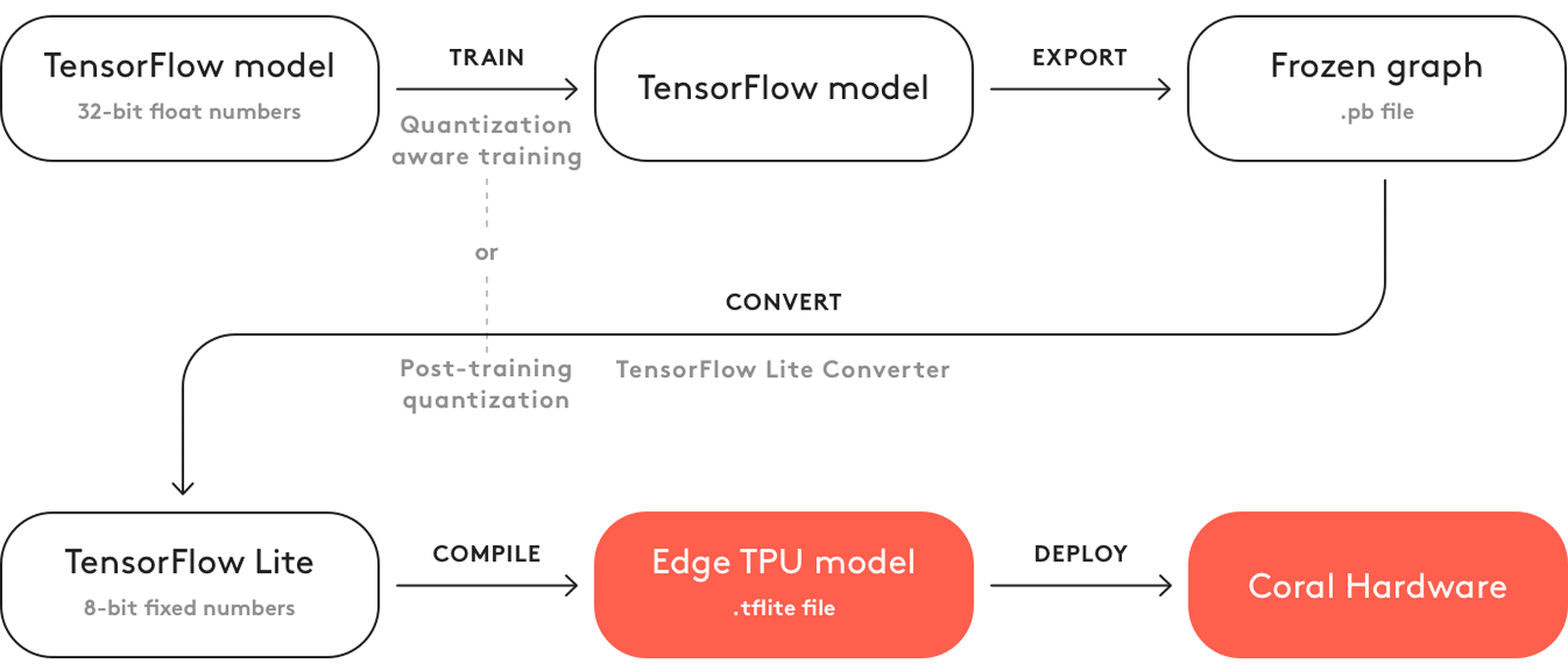

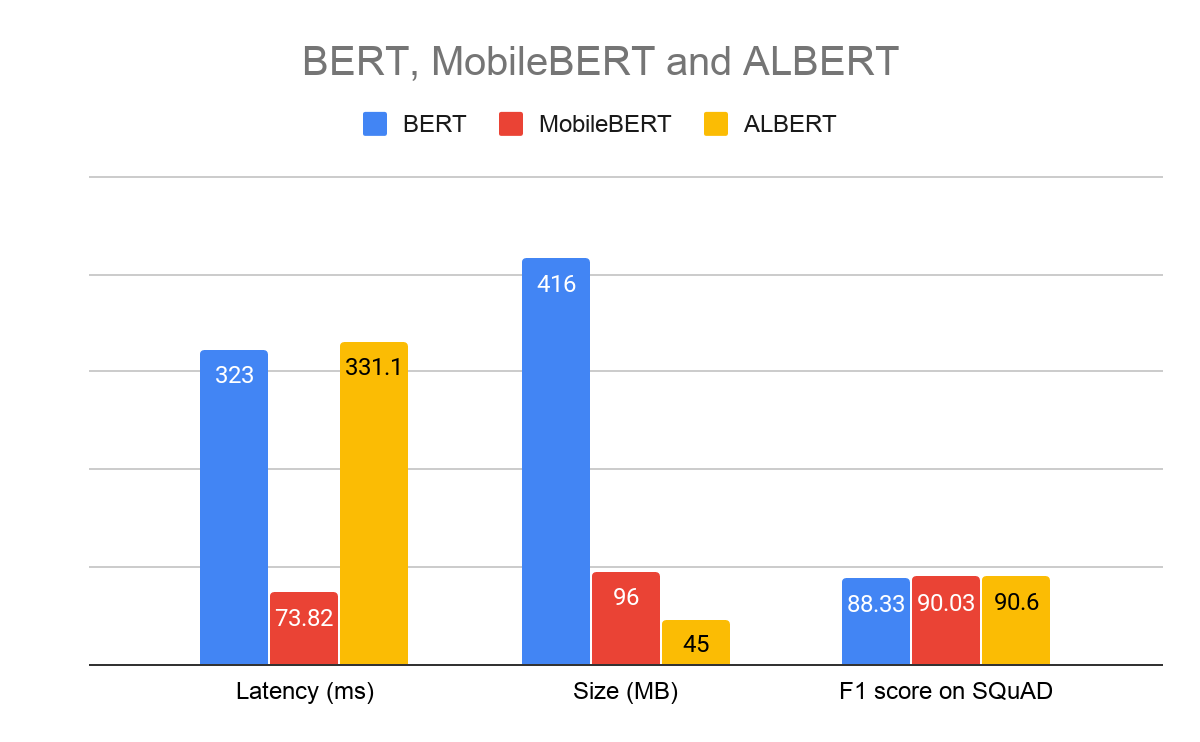

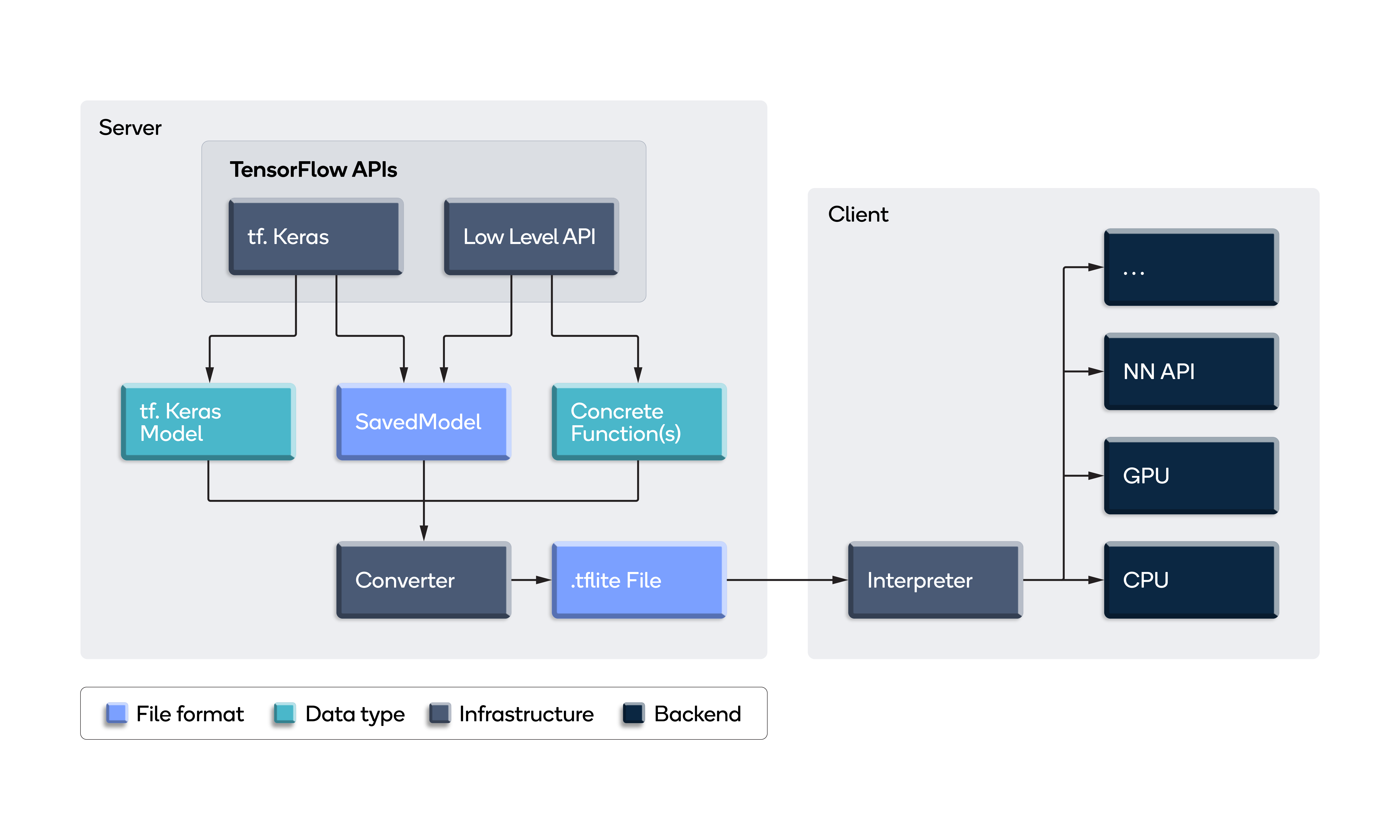

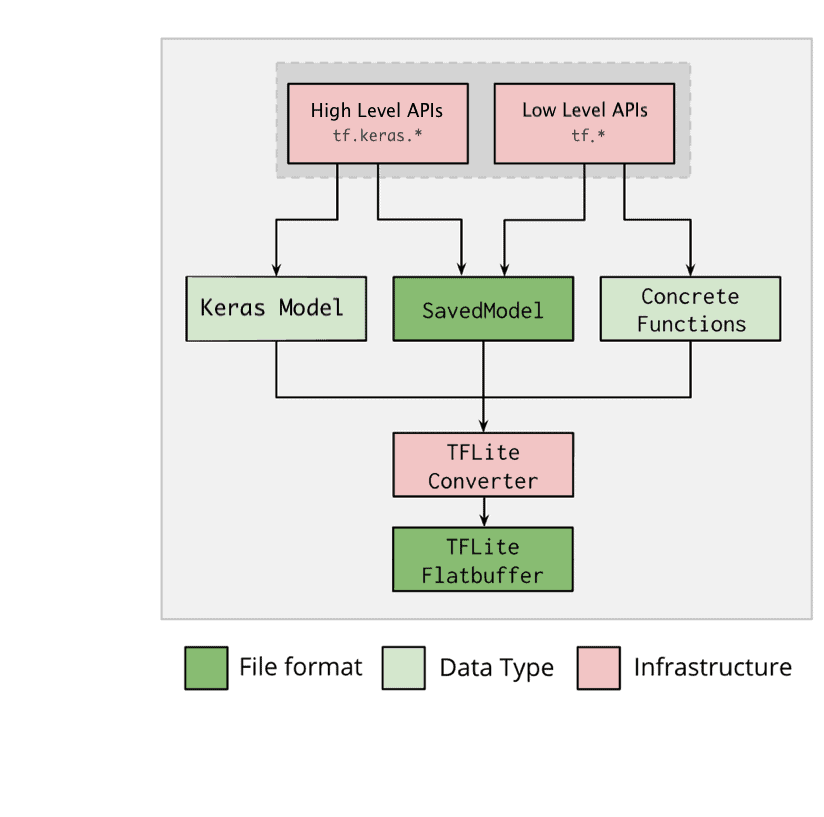

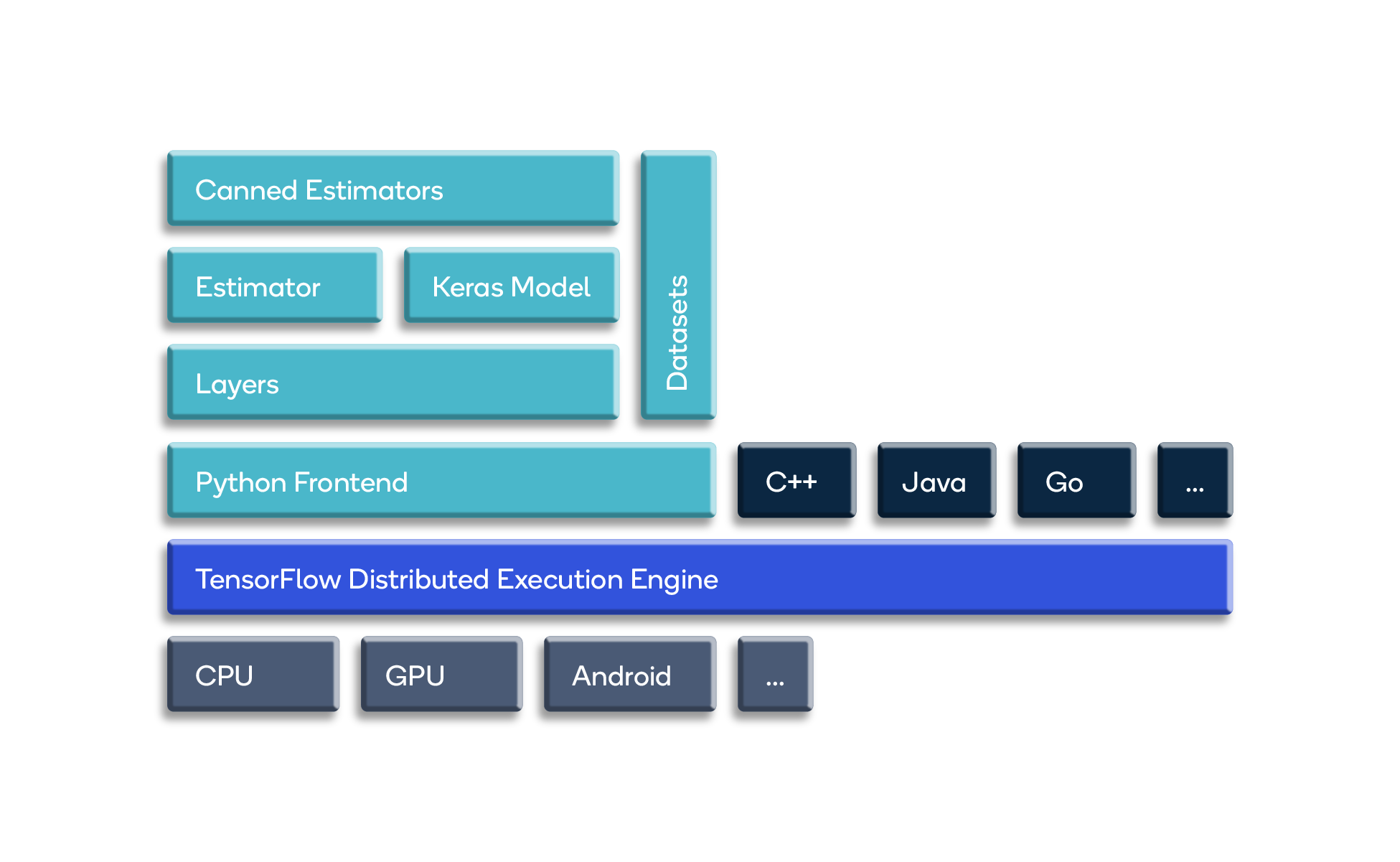

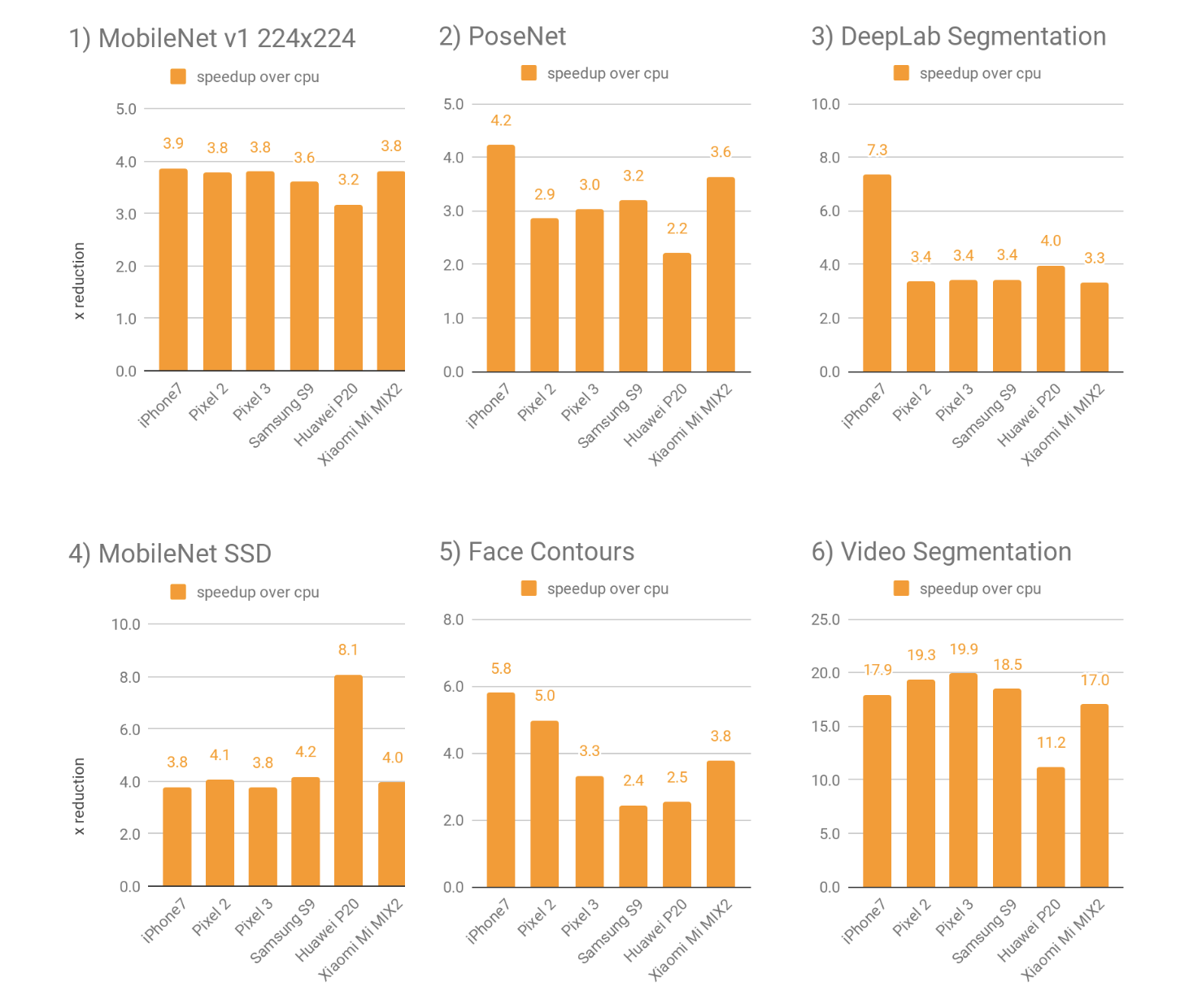

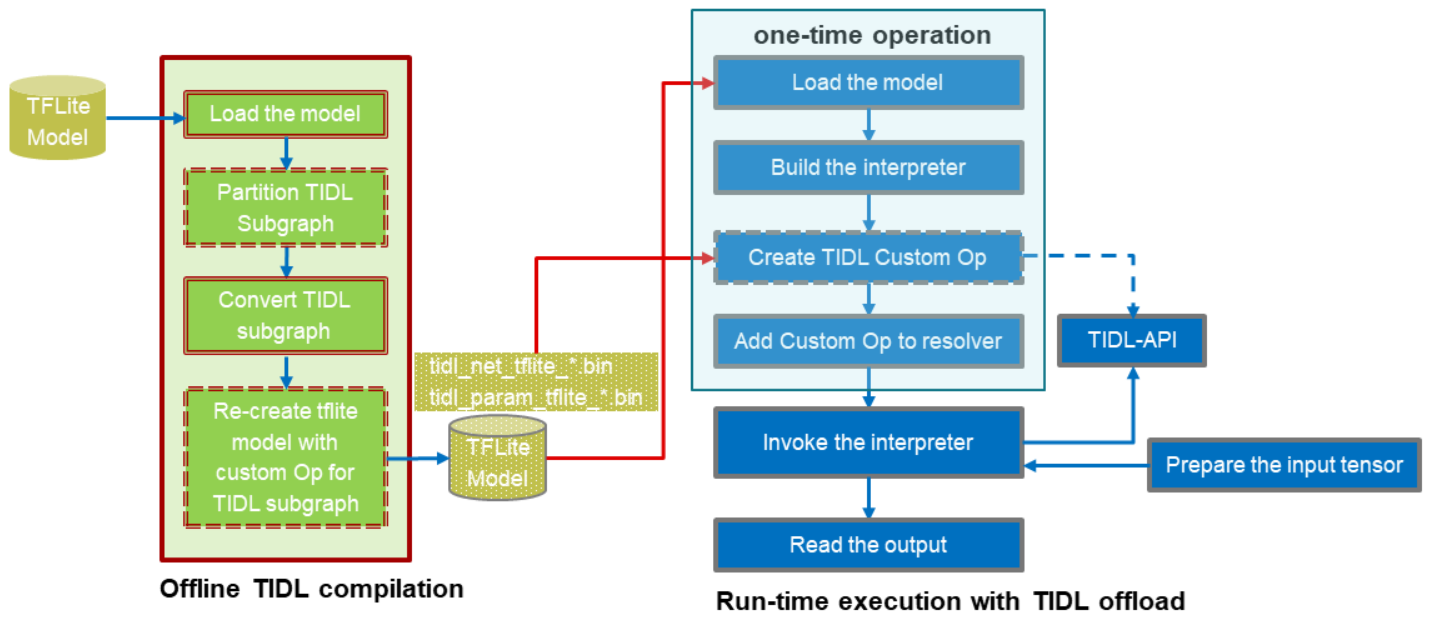

Everything about TensorFlow Lite and start deploying your machine learning model - Latest Open Tech From Seeed
